Click here to go see the bonus panel!

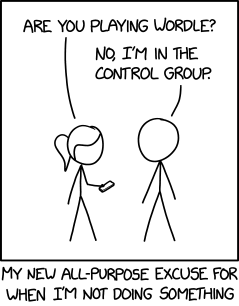

Hovertext:

I'm guessing the mirror of Erised is in a lot of erotic fanfiction, but I refuse - REFUSE - to learn if I'm right.

Today's News:

Click here to go see the bonus panel!

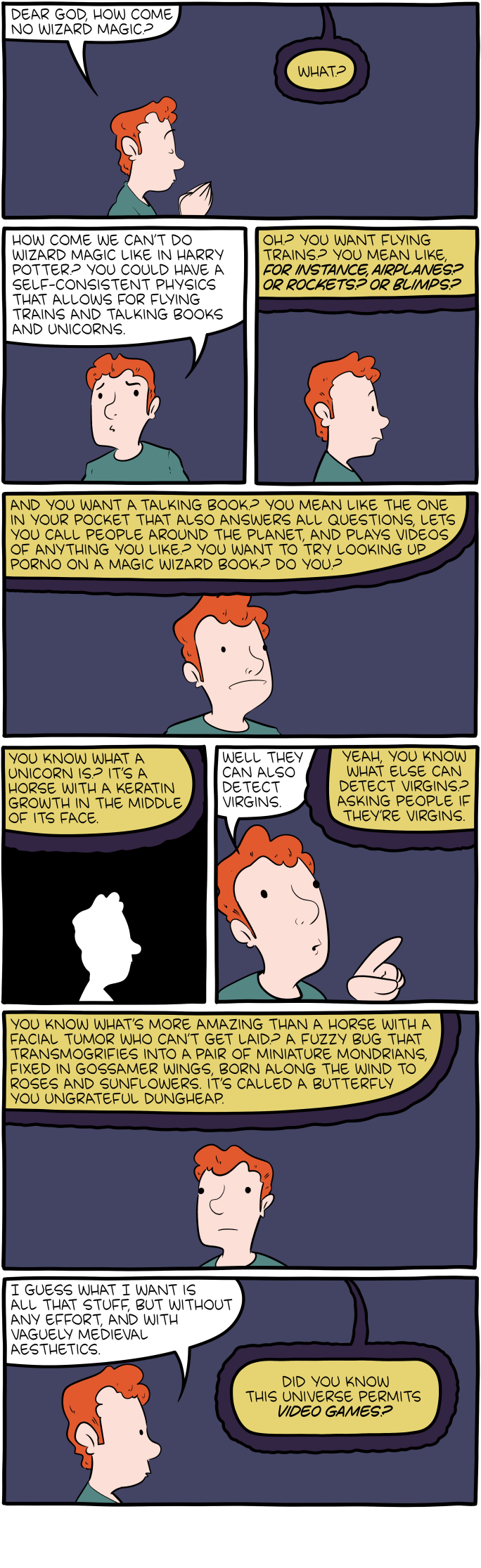

Hovertext:

If you hear someone try to save before attacking you, run.

Today's News:

Click here to go see the bonus panel!

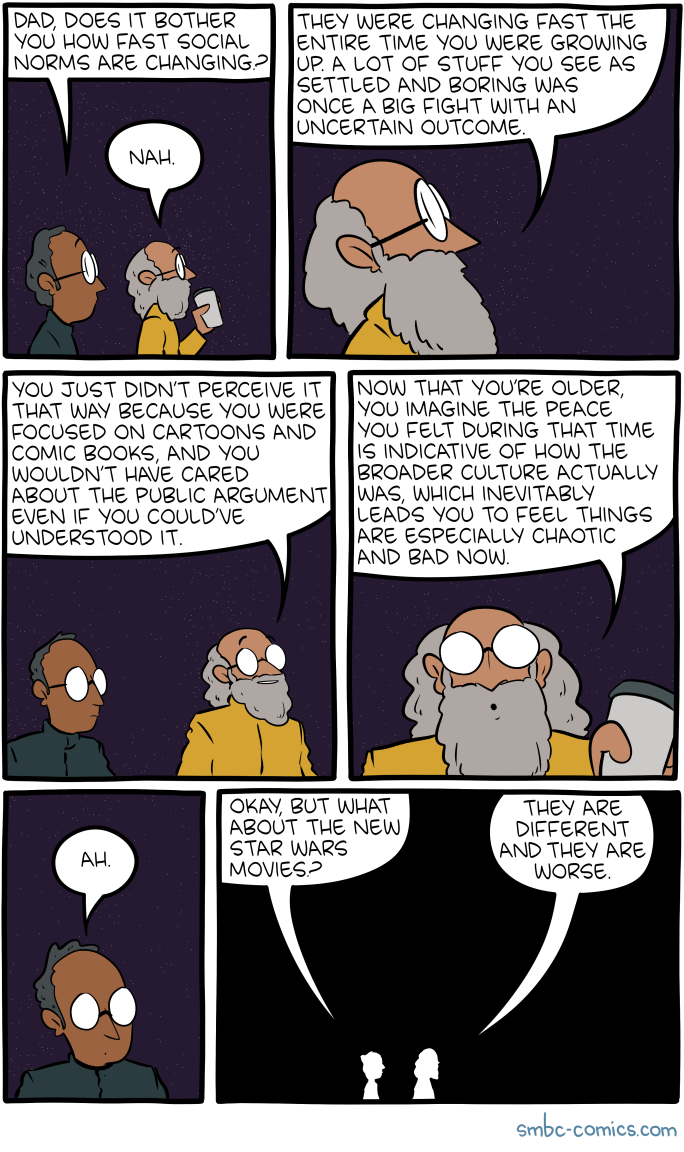

Hovertext:

Looking it over now I'm very worried about the coffee in that cup.

Today's News:

Click here to go see the bonus panel!

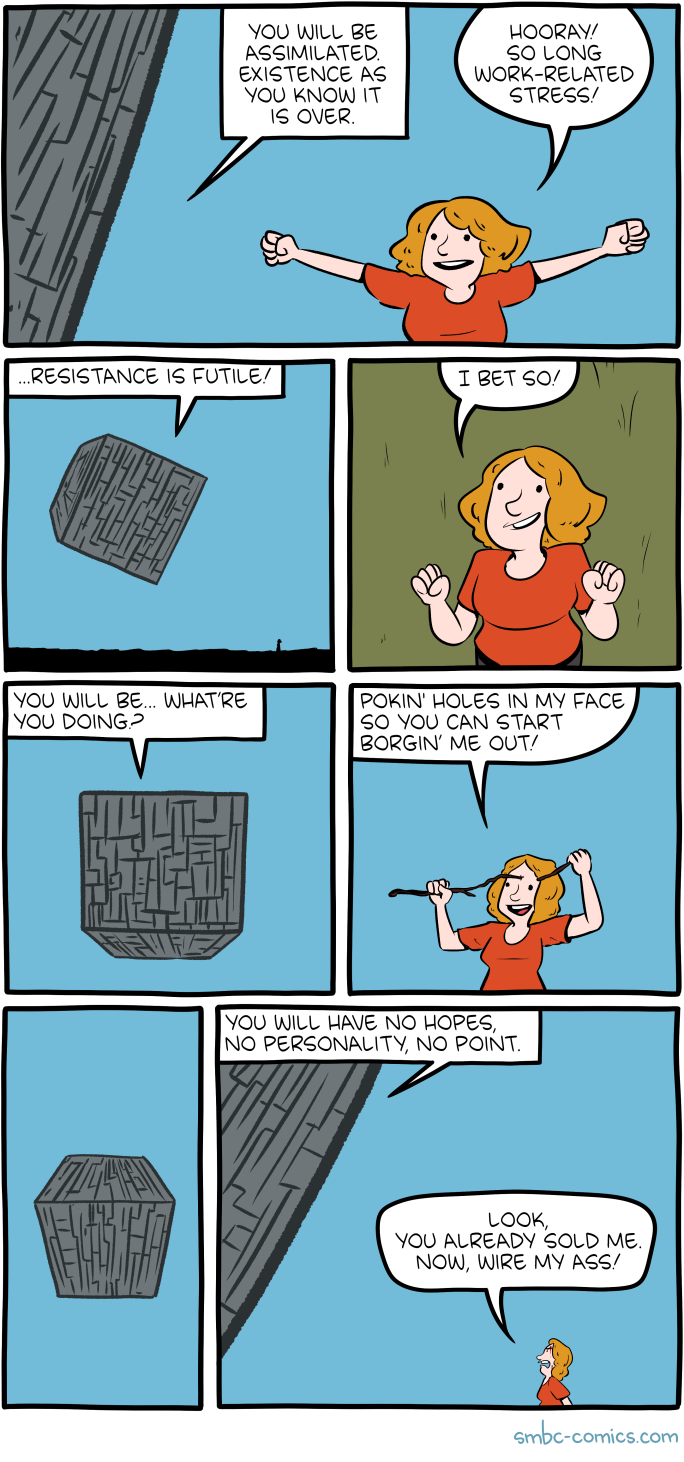

Hovertext:

Borging is my contribution to English verbs.

Today's News:

Click here to go see the bonus panel!

Hovertext:

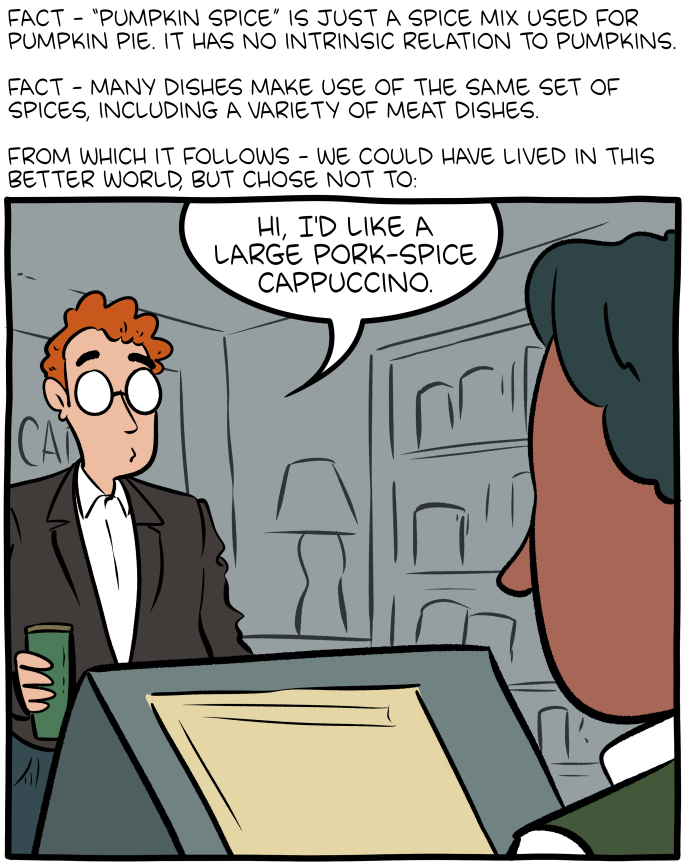

With a dab of pork-cream on top, if you please.

Today's News:

Next Page of Stories